Minimal-shot autonomy

Minimal-shot autonomy concerns the question of how a system survives in unfamiliar environments. The commonly accepted response is to collect more data and retrain. We know this answer works, but it's impractical. It does not scale across the long tail of conditions a deployed vehicle, robot, or drone may encounter in the real world. Snow, dust, smoke, washouts and variable human infrastructure are obvious examples. A perception system that depends on having been trained on each new condition will not only be expensive and cumbersome to develop, but will necessarily lag every condition it has not yet encountered.

The protagonist of this project is the humble snow-clearing vehicle, dedicated to doing a task ripe for automation. Maintaining a workforce of trained drivers for engagement during only a small subset of the year, who must deploy at very short notice, is inefficient and expensive. More importantly, snow-clearing is a geographically bounded, repetitive and speed-limited operation over roads whose geometry is already known. Unlike open-ended urban driving, the operational objective is narrow: keep the vehicle aligned with a known road corridor while avoiding people, vehicles, kerbs, signs, parked cars and other obstacles. This makes the snow plough a particularly strong candidate for autonomy.

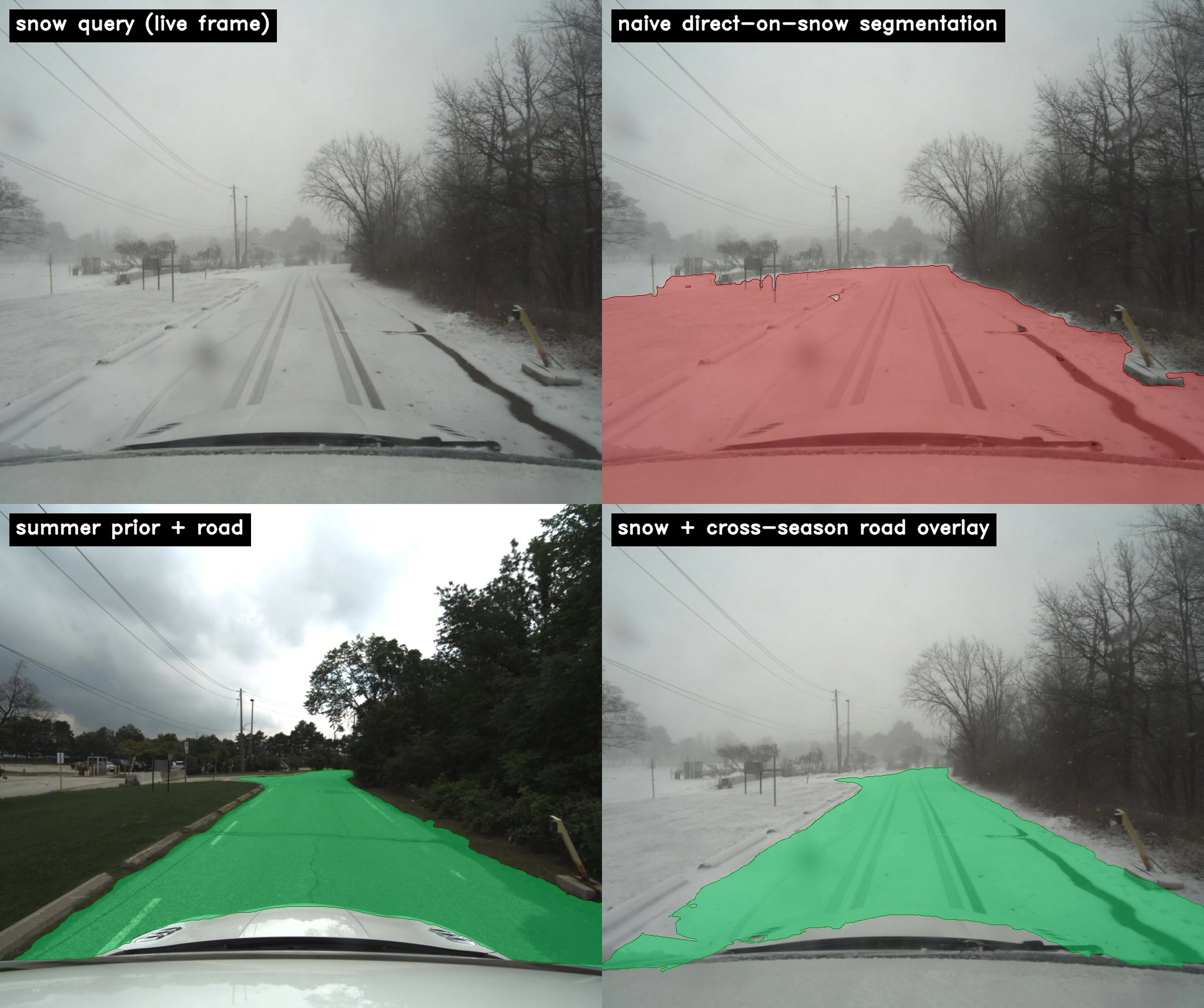

A snow plough's job is simple: sweep the road clear. The obvious difficulty is that, while the plough is in operation, the road is necessarily invisible. Curbs are buried, lane markings are gone, the boundary between tarmac and verge is no longer defined. A self-driving stack trained on traditional road conditions, applied directly to the plough's camera, will report with calibrated confidence that the entire scene is road and should be cleared. Approaching this problem via the traditional method of annotating a labelled snow dataset large enough to cover the long tail of road, weather, and time-of-day combinations is uneconomic and chronically incomplete. The alternative principle proposed in this project does not require new training data and is applicable across the broader concept of minimal-shot autonomy.

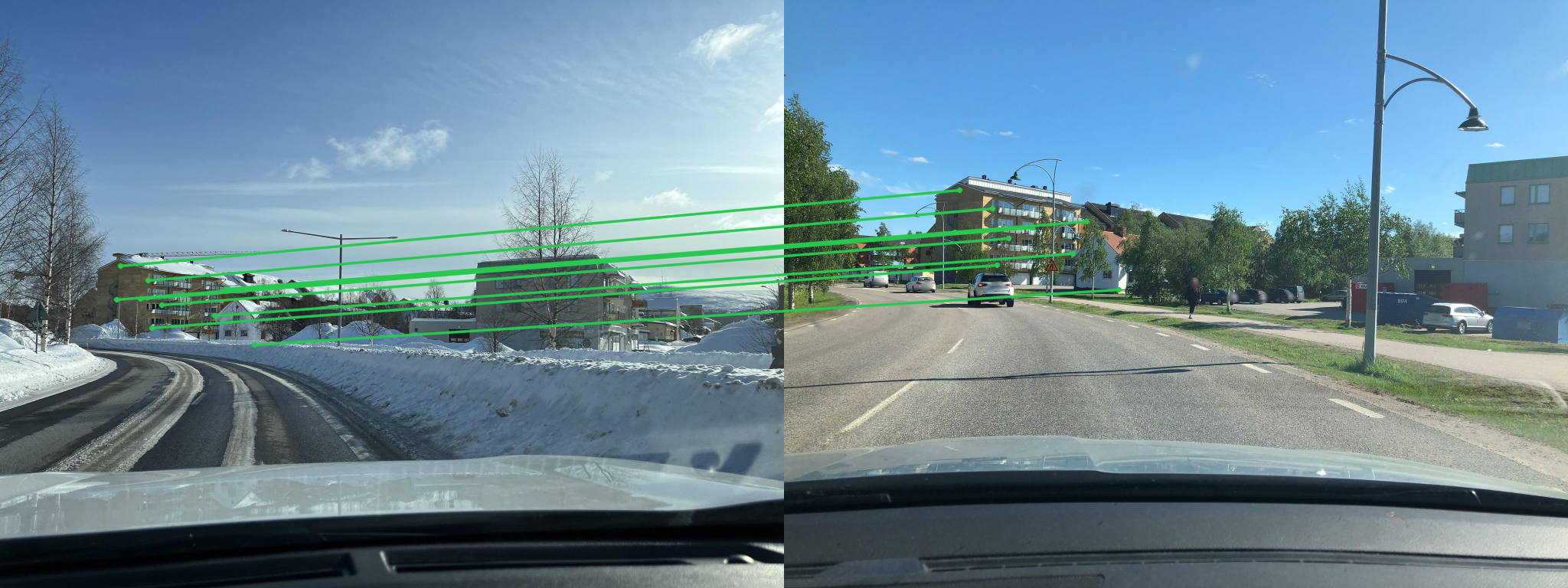

For almost every operating environment where autonomy fails for lack of data, an adjacent regime exists, temporally or seasonally or geographically, where data is plentiful and rich, and whose key components remain the same across environments. The road which needs to be ploughed this winter is the same road it was in the summer. Its appearance has changed, but its position in space and relative to local landmarks has not.

Snowseer is a canonical demonstration of leveraging training data via structural constants across environments. It transfers knowledge across regimes to achieve minimal-shot autonomy.